Artificial Intelligence Is Revealing Secrets About How the Ghent Altarpiece Was Made—and Damaged

Artificial intelligence is helping researchers decode x-rays of the two-sided panels.

Researchers have harnessed the power of artificial intelligence to decode x-ray images of the Ghent Altarpiece, the 15th-century masterpiece by brothers Hubert and Jan van Eyck at St. Bavo's Cathedral in Ghent, Belgium.

Being able to read the x-rays can help identify damage to the painting by showing areas where varnish or overpainting hides cracks, paint loss, or other structural issues. The scans can also teach researchers about the artists’ working methods, revealing the physical structure of the canvas or panel and its supports, as well as the different layers of paint used in its creation.

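But because many of the Ghent Altarpiece's panels are double-sided, an x-ray captures the paintings on both faces at once, superimposed in a single image, which has made the scans difficult to parse. A newly developed algorithm has allowed scientists to separate that combined data into two distinct images, one for each side.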

"This approach demonstrates that artificial intelligence-oriented techniques, powered by deep learning, can be used to potentially solve challenges arising in art investigation," said Miguel Rodrigues of the department of electronic and electrical engineering at University College London in a statement.

The Ghent Altarpiece, front and back. The missing panel was stolen almost 100 years ago. Photo by D. Provost (closed Ghent Altarpiece) and H. Maertens (open Ghent Altarpiece); courtesy of Saint-Bavo’s Cathedral, Art in Flanders.

The 12-panel polyptych painting, titled The Adoration of the Mystic Lamb and completed in 1432, has been undergoing restoration at Belgium's Royal Institute for Cultural Heritage since 2012. As part of that work, the piece was subjected to hyperspectral imaging, macro x-ray fluorescence scanning, and x-ray radiography, as well as high-resolution photography in both visible and infrared light.

The study's findings, published last week in the journal Science Advances, focus on the outermost upper panels, which show Adam and Eve when the altarpiece is open and, on their reverse sides, two interiors from the Annunciation scene that are visible when it is folded closed. Because an x-ray is a 2-D representation of a 3-D object, it flattens a variety of information, including the physical make-up of the work, into a single image, making it difficult to form a clear picture of the painting on either side.

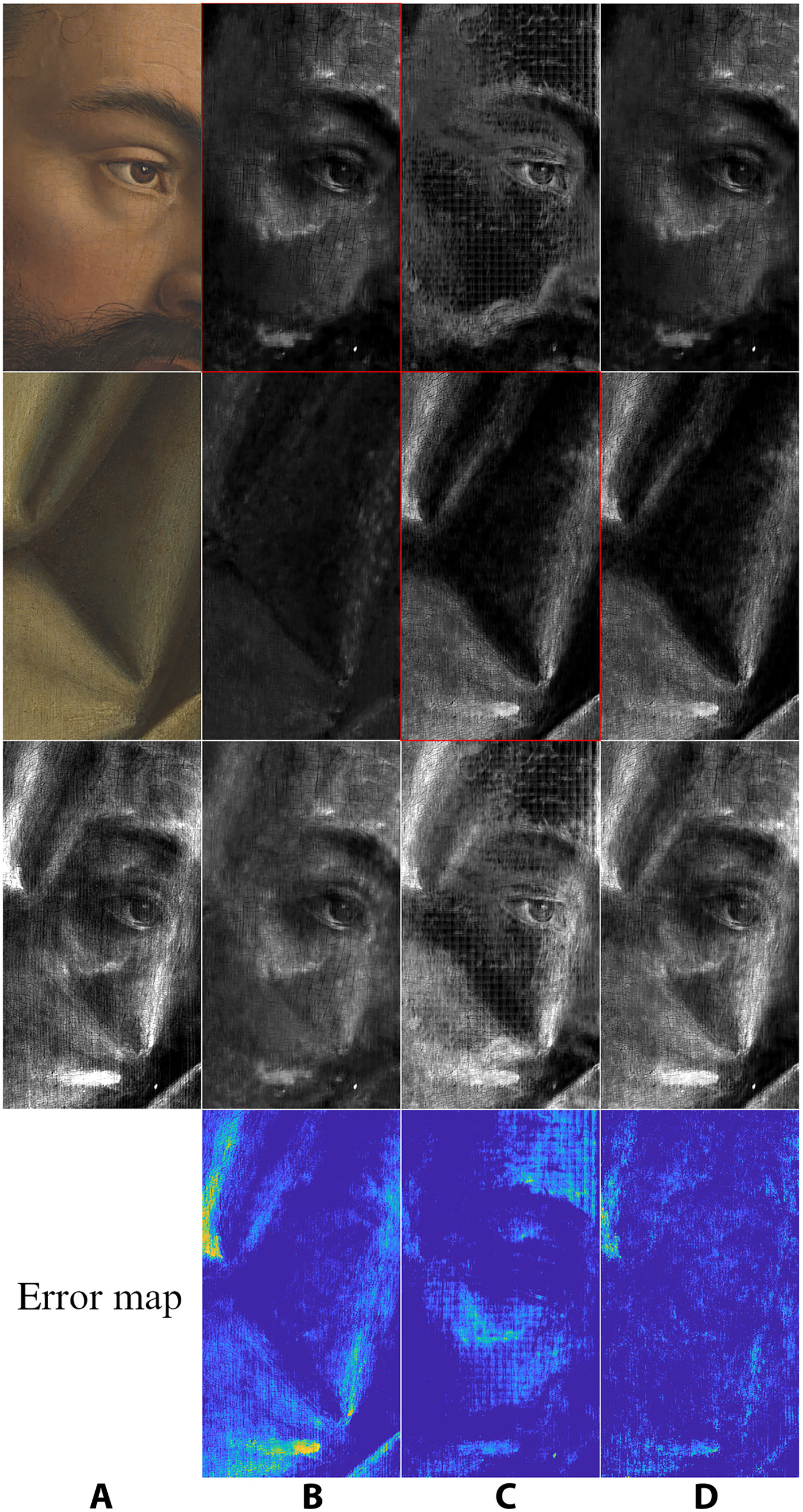

The algorithm interpreting the x-rays of a detail of Adam on the Ghent Altarpiece. The first two rows show the unmixed x-rays separating the two sides, while the third row shows the original x-ray of the full panel. Courtesy of KIK-IRPA.

To better interpret this information, scientists turned to a self-supervised framework based on convolutional neural networks, training a deep neural network on color photographs of the front and back of the paintings, as well as the x-ray of the entire panel. The trained network could then reconstruct an x-ray image for each side of the panel, separating the x-ray of the two-sided painting into two distinct x-ray images.
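To make the idea of self-supervised separation more concrete, here is a toy sketch in Python. It is not the researchers' code. It assumes, as a simplification, that the mixed x-ray is just the sum of each side's contribution, and it swaps the study's deep convolutional network for a hypothetical per-pixel linear model (`predict`). The function names and the synthetic data are illustrative only. The key point is that the model never sees an x-ray of either side on its own: its only training signal is that its two predictions, one from each side's photo, must add back up to the measured x-ray of the whole panel.

```python
import random

def predict(weights, bias, rgb):
    """Hypothetical stand-in for the CNN: maps one pixel's (r, g, b) values to an x-ray intensity."""
    return sum(w * c for w, c in zip(weights, rgb)) + bias

def train_separator(front, back, mixed, steps=5000, lr=0.1):
    """Fit one shared model so the two predicted single-side x-rays add up to the measured x-ray.

    front, back: per-pixel (r, g, b) tuples from photos of each side, assumed
    already aligned with the x-ray (the back photo mirrored to match).
    mixed: the measured x-ray intensity at each of those pixels.
    """
    weights, bias = [0.0, 0.0, 0.0], 0.0
    n = len(mixed)
    for _ in range(steps):
        grad_w, grad_b = [0.0, 0.0, 0.0], 0.0
        for f_px, b_px, x in zip(front, back, mixed):
            # Self-supervised residual: the only target is the mixed x-ray,
            # so no ground-truth x-ray of either side is needed.
            err = predict(weights, bias, f_px) + predict(weights, bias, b_px) - x
            for k in range(3):
                grad_w[k] += 2 * err * (f_px[k] + b_px[k]) / n
            grad_b += 2 * err * 2 / n  # the bias enters once per side
        # Plain gradient descent on the mean squared reconstruction error.
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b
    return weights, bias

def separate(front, back, weights, bias):
    """Return the estimated x-ray of the front and of the back."""
    return ([predict(weights, bias, px) for px in front],
            [predict(weights, bias, px) for px in back])

def make_synthetic_panel(n_pixels, true_weights, true_bias, seed=0):
    """Hypothetical toy data: random 'photos' of both sides plus their summed x-ray."""
    rng = random.Random(seed)
    front = [tuple(rng.random() for _ in range(3)) for _ in range(n_pixels)]
    back = [tuple(rng.random() for _ in range(3)) for _ in range(n_pixels)]
    mixed = [predict(true_weights, true_bias, f) + predict(true_weights, true_bias, b)
             for f, b in zip(front, back)]
    return front, back, mixed

if __name__ == "__main__":
    front, back, mixed = make_synthetic_panel(40, [0.5, 0.3, 0.2], 0.1)
    weights, bias = train_separator(front, back, mixed)
    x_front, x_back = separate(front, back, weights, bias)
    print("recovered weights:", [round(w, 3) for w in weights], "bias:", round(bias, 3))
```

On this toy data the sketch recovers the two single-side x-rays almost exactly, but only because the data were generated with the same additive, linear model it fits. Real panels add wood grain, supports, and pigments that a per-pixel linear map cannot capture; a deep convolutional network, as in the study, can draw on whole image regions rather than single pixels.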

The new method has only been used on two of the altarpiece’s panels so far, but is expected to assist in the further study of the famous work. Moving forward, it could also make it easier to identify covered-up compositions on reused canvases, or changes an artist made during a work’s creation.