Every Monday morning, artnet News brings you The Gray Market. The column decodes important stories from the previous week—and offers unparalleled insight into the inner workings of the art industry in the process.

After a holiday-shortened week, two stories about the gap between headline-grabbing numbers and their actual meaning…

The Metropolitan Museum of Art, New York. Photo: ANGELA WEISS/AFP/Getty Images.

MET NEUTRALITY

On Thursday, the Metropolitan Museum of Art released the attendance figures for its 2018 fiscal year—a standard administrative procedure that can’t avoid being interpreted as a pseudo-referendum on its controversial decision to begin charging mandatory admission to a wide swath of non-NYC residents as of March 1. And although the museum was understandably proud of the results, we should be careful when judging how valuable they are in answering the questions that matter most about visitorship.

To recap, the museum reported a grand total of 7.35 million visitors across its three locations (Fifth Avenue, the Met Breuer, and the Cloisters) in the 2018 fiscal year (which, for the Met, runs from July 1 to June 30). This total represents a roughly five percent increase over the seven million total visitors it welcomed in 2017—and more importantly, an all-time record for the museum during a single fiscal year.

Still, before we all transform the Met’s infamous David H. Koch fountains into celebratory Gatorade baths, there are a couple of points worth surfacing here.

First, the new admission policy only came into force during the final four months of the Met’s fiscal year. And since the museum released only annual attendance figures, not monthly ones, the data are too broad to say much about what effect the new ticketing rules did (or didn’t) actually have on visitorship once they were in place.

In fact, this would be true even if we did have monthly attendance data. Why? Because the admissions policy wasn’t the single, solitary variable separating March 1, 2018 from all that came before. The Met also cycled its exhibitions. Competing institutions elsewhere in the city did, too. Most importantly, the world as a whole turned, creating innumerable differences in the public’s viewing plans between the period in question and the months before it.

So how do we separate the effect of the ticket hike alone from this mosh pit of variables?

Well, we kinda can’t. At least, not with what we have.

Instead, the most I would personally feel comfortable concluding from the attendance report is this: The new admission fees did not prevent the Met from besting last year’s visitor figures.

Which legitimately deserves to be celebrated… but only to a point.

“Michelangelo: Divine Draftsman and Designer” at the Metropolitan Museum of Art. Photo courtesy Drew Angerer/Getty Images.

All sides of the admissions policy debate ostensibly want at least one of the same things: namely, for more people to see more art at the Met. The museum’s 2018 attendance data say that happened.

But this shared objective leads to the second, much larger caveat that needs to be addressed. Unless I’ve completely missed the plot, the giant ladle stirring the cauldron in the museum admission debate isn’t how many total people are visiting. It’s who those visitors are—in particular, from the standpoints of race/ethnicity and economic class.

The Met’s annual attendance data tell us nothing about these demographics. The reason I’m flagging this detail is that it connects to what, in my view, matters most about museum attendance, and how best to evaluate it.

To me, measuring absolute gains or losses in museum visitorship is secondary. It’s a little like trying to judge the health of a polluted pond by recording how many fish are swimming around inside, while leaving aside the curious detail that most of them now have multiple heads and breathe fire.

If we’re concerned about the ethnic and economic diversity of museum audiences, then, we would need to review data that slice total attendance by ethnicity and economic class. Even more to the point, as cultural analytics guru Colleen Dilenschneider has written, we would need to compare the year-on-year changes in the Met’s demographic data to the same year-on-year changes in the general population.

Why? So we could identify whether the museum’s outreach trends are keeping pace with trends in humanity as a whole, not just attracting even more well-off white people.
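For the numerically inclined, here is a minimal sketch of that comparison in Python. Every figure in it is made up for illustration (the Met has published no such breakdown), and the function names `share_shift` and `outreach_gap` are hypothetical. The idea: measure how one group's share of museum visitors changed year on year, then subtract the change in that group's share of the general population.

```python
def share_shift(prev_count, prev_total, curr_count, curr_total):
    """Change, in percentage points, of a group's share between two years."""
    prev_share = prev_count / prev_total
    curr_share = curr_count / curr_total
    return (curr_share - prev_share) * 100


def outreach_gap(museum_shift, population_shift):
    """Museum share change minus population share change (percentage points)."""
    return museum_shift - population_shift


# Hypothetical numbers for illustration only; not real Met or census data.
# The group's visitor count rises along with total attendance,
# so its share of the museum audience stays flat.
museum = share_shift(prev_count=1_400_000, prev_total=7_000_000,
                     curr_count=1_470_000, curr_total=7_350_000)
# Meanwhile the same group's share of the general population grows.
population = share_shift(prev_count=300, prev_total=1_000,
                         curr_count=310, curr_total=1_000)
gap = outreach_gap(museum, population)
print(f"museum: {museum:+.1f} pts, population: {population:+.1f} pts, gap: {gap:+.1f} pts")
```

In this invented scenario, total attendance and the group's raw visitor count both grow, yet the gap comes out negative because the museum audience did not keep pace with the population. That is exactly the kind of detail a headline attendance total cannot reveal.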

Bottom line: The Met did not supply those data points. (To be clear, it never has, making this year’s report no different than last year’s or any others.)

I’m not trying to indict the museum for it. Nor am I implying that the institution is hiding anything, especially since this kind of data would be difficult (and uncomfortable) to collect every time someone walked through the front doors.

But I am pointing out that, when it comes to the question of how the museum’s ticketing policy is affecting visitorship, my personal jury is still out. And if you’re waiting for good evidence, yours probably should be, too.

[Metropolitan Museum of Art]

“Measuring the Magic of Mutual Gaze,” by Marina Abramovic, Suzanne Dikker, Matthias Oostrik. ©Marina Abramovic, 2011. Photo by Maxim Lubimov, Garage Center for Contemporary Culture.

MIND ON MY MONEY & MONEY ON MY MIND

On Monday, my colleague Rachel Corbett reported on a new neuroscience study that suggests artists may value money differently than professionals in fields generally deemed non-creative. But based on a close look at the research and the wider context, I think the results are being widely misinterpreted—and deserve a healthy dose of skepticism anyway.

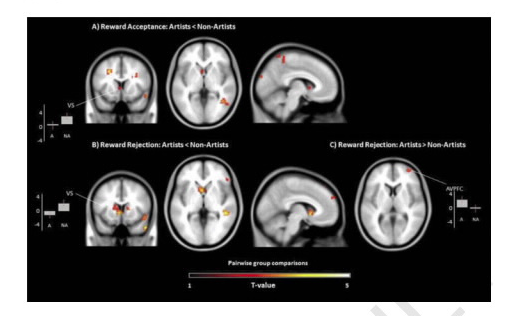

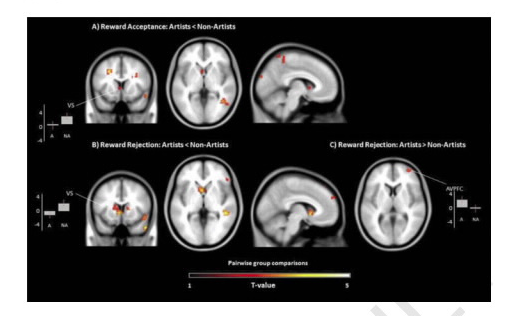

Under the provocative headline “Why Are Artists Poor? New Research Suggests It Could Be Hardwired into Their Brain Chemistry,” Corbett relayed that functional magnetic resonance imaging (fMRI) of subjects who worked as “actors, painters, sculptors, musicians, or photographers” showed less activity in the brain’s reward centers than people who worked in “non-creative” roles when given an opportunity to win small amounts of money in a laboratory setting—a finding that suggests cash carries less allure for creative types than it does for most people.

Now, I spend a lot of time thinking about artists, art professionals, and their relationship to cash. I know from our trusty site traffic monitor in the artnet newsroom that a lot of people read this story. I also know from the @ replies to artnet’s Twitter and Instagram that plenty of those people had strong feelings about it.

The most prominent sentiment seemed to be that the study (and artnet, by proxy) were blaming the true victims in this situation—namely, artists—by suggesting that they are destined to be poor because something may be inherently wrong with them, not because of external factors like a non-meritocratic gallery system or the proliferation of deadbeat clients and dealers.

But this is not what the study’s results suggested. Instead, the researchers simply concluded that artists “are less inclined to react to the acceptance of monetary rewards” than non-artists—meaning, in effect, that the artists in the sample prioritized cash less than normies when making certain practical decisions.

Which… duh? In fact, short of proposing that it might not be advisable on a first date to go beast mode on a full slab of ribs, I’m having a hard time imagining a less controversial statement than that one—especially to artists themselves. After all, if they didn’t find a higher value in pursuing creative goals than making money, they would just be content to sink into stable, boring jobs like the rest of us rather than braving the many risks, uncertainties, and injustices of life as an artist.

Brain scans looking at the dopaminergic reward system of artists and non-artists in the new study “Reactivity of the Reward System in Artists During Acceptance and Rejection of Monetary Rewards” in the Creativity Research Journal.

But if you still think this study’s conclusions are bogus, consider this: It was based on the brain images of just 24 people: 12 artists and 12 non-creatives. Two dozen people! That’s it!

To be clear, this is not to say that the neuroscientists’ findings are crap. But it is to say, as Corbett implies, that 24 subjects divided into two groups of 12 is generally too small a sample to support confident, statistically robust conclusions.

Here’s a ridiculous true-life test case to show what I mean: I visited a friend of mine in Minneapolis about two years ago, and I found out when I arrived at my hotel that it would also be headquartering a convention of furries for the weekend. For the sheltered, furries are a group of people with a fetish for sexual hijinks performed in the types of fuzzy animal costumes often worn by college mascots.

(Side note: I fully recognize it sounds HIGHLY SUSPICIOUS that I just so happened to spontaneously wander into the center of this super-self-selecting bacchanal, but what can I say? The randomness of the universe is real!)

Now, I’m willing to bet that, if I’d interviewed them, I could have found groups of 12 furries that all, say, worked in retail, or preferred nonfiction to fiction, or liked savory foods more than sweet ones.

But would that necessarily mean there was a causal link between any of those choices and fantasizing about burning through the Kama Sutra with the San Diego Chicken? No, even if I collected brain scans for support. Correlation is not causation.
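The small-sample worry can also be sketched with a quick simulation. To be clear, this is not the study's data or method: the function name `chance_gap_rate`, the half-a-standard-deviation threshold, and the comparison group size of 200 are all assumptions picked for illustration. The sketch draws two groups from the very same population, so any gap between their averages is pure chance, and counts how often a sizable gap shows up anyway.

```python
import random


def chance_gap_rate(group_size, trials=2000, threshold=0.5, seed=0):
    """Share of trials in which two groups drawn from the SAME population
    differ in their average by more than `threshold` standard deviations."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    hits = 0
    for _ in range(trials):
        group_a = [rng.gauss(0, 1) for _ in range(group_size)]
        group_b = [rng.gauss(0, 1) for _ in range(group_size)]
        mean_a = sum(group_a) / group_size
        mean_b = sum(group_b) / group_size
        if abs(mean_a - mean_b) > threshold:
            hits += 1
    return hits / trials


small = chance_gap_rate(group_size=12)
large = chance_gap_rate(group_size=200)
print(f"groups of 12:  {small:.1%} of trials show a gap by chance alone")
print(f"groups of 200: {large:.1%} of trials show a gap by chance alone")
```

Under these assumptions, groups of 12 should throw up a seemingly meaningful gap in a substantial minority of trials, while groups of 200 almost never do. None of this proves any particular small study wrong; it only shows why small groups call for extra caution.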

This leads us to another point. Even though the study in question was published in a peer-reviewed journal, not all peer-reviewed journals are created equal. By either the h-Index or SCImago’s Journal Rankings—both of which attempt to quantify the influence of specific academic publications—this study’s venue, the Creativity Research Journal, doesn’t even make the top 1,000.

More broadly, a 2017 study in the peer-reviewed journal PLOS Biology found that more than half(!) of published findings in psychology and cognitive neuroscience that have been “deemed to be statistically significant are likely to be false” based on their inability to be consistently replicated by other researchers. Even more relevant (emphasis mine), “cognitive neuroscience studies”—like the one in question here—“had higher false report probability than psychology studies, due to smaller sample sizes.”
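The logic behind a "false report probability" can be sketched with a standard Bayesian calculation. This is an illustration, not the PLOS Biology paper's own computation: the function name and every input below (the significance threshold, the statistical power, and the assumed share of tested hypotheses that are really true) are assumptions chosen only to show how lower power, which typically comes with smaller samples, raises the share of "significant" findings that are false.

```python
def false_report_probability(alpha, power, prior_true):
    """Share of statistically significant findings that are false positives.

    alpha:      chance a test flags an effect when none exists
    power:      chance a test detects an effect that does exist
    prior_true: assumed share of tested hypotheses that are actually true
    """
    false_positives = alpha * (1 - prior_true)
    true_positives = power * prior_true
    return false_positives / (false_positives + true_positives)


# Hypothetical inputs for illustration only.
low_power = false_report_probability(alpha=0.05, power=0.2, prior_true=0.1)
high_power = false_report_probability(alpha=0.05, power=0.8, prior_true=0.1)
print(f"low power:  {low_power:.0%} of significant findings are false")
print(f"high power: {high_power:.0%} of significant findings are false")
```

With these made-up inputs, the low-power scenario yields a much larger share of false "significant" findings than the high-power one, which is the general pattern the quoted passage describes.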

And like that, we come full circle.

Again, I don’t find the grand conclusion of this study to be scandalous. Even if I did, I would still have major questions about its methodology. But since artists are keen to avoid financial hardship in an über-challenging (and often highly unfair) industry, the real takeaways here are that it’s almost always good business to read the fine print and consider the source.

[artnet News]

That’s all for this week. ‘Til next time, remember one-time British Prime Minister Benjamin Disraeli, who is famously credited with saying, “There are three kinds of lies: lies, damned lies, and statistics.”