Every week, Artnet News brings you The Gray Market. The column decodes important stories from the previous week—and offers unparalleled insight into the inner workings of the art industry in the process.

This week, back on the A.I. beat…

CHATTING UP A STORM

Has the inescapable barrage of stories about A.I.-powered text-to-image generators pummeled you into paralysis yet? Well, what I’m about to say next might send some of you reeling regardless. Despite all the legal chaos and creative potential already unleashed by DALL-E, Midjourney, and other algorithms that transform typed prompts into increasingly high-fidelity visuals, their consequences for contemporary life are dwarfed by those from an application largely absent from the art discourse to date.

That algorithm is ChatGPT, an A.I.-powered chatbot that is now knocking industry after industry off its axis. The art world should certainly prepare itself for the same treatment. But our niche ecosystem also might be the perfect lens to reveal the less-discussed weaknesses in this groundbreaking technology.

ChatGPT comes to us from none other than OpenAI, the same well-funded Silicon Valley startup responsible for DALL-E. I’ve written about OpenAI’s history and potential for overreach before, so I won’t drag regular readers through those waters again. Instead, I’ll focus on what differentiates ChatGPT and other A.I.-powered chatbots from text-to-image generators, and why the former class of algorithm is drumming up even more drama than the latter.

In technical terms, ChatGPT is what’s called a Large Language Model (LLM). The basic idea is that the algorithm swallows a gargantuan amount of text-based information scoured from the web and, through time and repetition, learns to dissect how words connect to each other, to facts, and to abstract concepts. This aptitude then allows the algorithm to generate coherent new texts of varying size and complexity from short-form written prompts. (Fundamentally, this is the same process used by text-to-image generators, which are trained by extreme repetition to dissect how words connect to images. It’s just that the inputs and outputs are different.)

This brute-force education has, by mid-January 2023, empowered LLMs to do all kinds of things with surprising proficiency based on text prompts with even modestly informed parameters: write emails or essays, summarize books, translate foreign languages, even write computer code.

The key to Large Language Models is the “large” part. Saying that LLMs drink from the firehose of the internet is orders of magnitude too conservative. It would be more accurate to say that they are designed to devour the online galaxy. The most robust LLMs are now trained “at the petabyte scale,” per TechCrunch. Unless you’re pretty deep into software development or data storage (or at least numerical prefixes), you’re almost certainly underestimating how much data that really is.

A petabyte, for the uninitiated, is 1 million gigabytes. One gigabyte is roughly equivalent to 230 songs in MP3 format, according to tech website MakeUseOf. This means a chatbot trained on a petabyte’s worth of data has consumed the equivalent of about 230 million songs (and multiples of that for every successive petabyte of training info). If we say for argument’s sake that the average song lasts three minutes, then it would take just over 1,300 years—that’s one and one-third millennia—before an immortal D.J. spinning through a one-petabyte music library would return to the top of the playlist.
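For anyone who wants to check that back-of-the-envelope math, here is a minimal sketch in Python. It assumes only the figures quoted above (230 songs per gigabyte, 1 million gigabytes per petabyte, three minutes per song); the function name and the 365.25-day year are my own illustrative choices, not anything drawn from TechCrunch or MakeUseOf.

```python
# Rough playlist arithmetic, using the figures quoted in the text.
SONGS_PER_GIGABYTE = 230          # MakeUseOf's approximate MP3 figure
GIGABYTES_PER_PETABYTE = 1_000_000
MINUTES_PER_SONG = 3              # assumed "for argument's sake"
MINUTES_PER_YEAR = 60 * 24 * 365.25  # assumption: average year length


def playlist_years(petabytes: float = 1.0) -> tuple[int, float]:
    """Return (song count, years of nonstop playback) for a library of this size."""
    songs = int(SONGS_PER_GIGABYTE * GIGABYTES_PER_PETABYTE * petabytes)
    years = songs * MINUTES_PER_SONG / MINUTES_PER_YEAR
    return songs, years


songs, years = playlist_years(1.0)
print(f"{songs:,} songs, about {years:,.0f} years of continuous play")
```

Under those assumptions, one petabyte works out to 230 million songs and a little over 1,300 years of nonstop playback, which matches the estimate above. Change any of the three inputs and the total scales proportionally.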

That is the magnitude of processing we’re talking about when it comes to major LLMs already… and they’re only getting bigger, better funded, and more skillful. They’re also getting more pervasive—and creating one existential crisis after another in the process.

EVERYTHING, EVERYWHERE, ALL AT ONCE

Fewer and fewer areas of contemporary life seem to be protected from the influence of A.I.-powered chatbots. In the U.S. education system, institutions ranging from middle schools to graduate schools are now upending their curricula, codes of ethics, and network permissions to try to counteract their students’ use of ChatGPT to hack their assignments. Twitter is swamped with threads about people’s experience using chatbots to write local news stories from transcripts of city council meetings, develop customized nutrition plans, and more. By some accounts, ChatGPT is already a standard part of many professionals’ workdays:

The example above skews heavily toward software development and other tech-fluent (or at least tech-adjacent) fields. (Qureshi works in health research at Peter Thiel’s Palantir.) But given how much of our daily experience is now being shaped by exactly those fields, ChatGPT’s penetration into the groundwater still signifies a shift with consequences for nearly everyone.

Does that include the art world? Absolutely. Images may be central to this industry, but text is the main tool wielded to give all those images meaning, context, and marketing support. That fact positions ChatGPT and its competitors to impact the art business just as much as almost any other.

The most obvious entry point for the technology is generating light-lift art scholarship. For years, Tate and other museums have been crowdsourcing the writing of artist bios and other publicly available educational content through Wikipedia. It’s hard for me to imagine that institutions would unanimously ignore A.I.-powered chatbots’ potential to do the same kind of work even faster, especially as the tech continues improving.

For-profit art businesses could leverage the tech just as easily. Think of all the press-release copy that no one wants to write, whether for gallery exhibitions, corporate news announcements, or just the “about” pages on thousands of websites. Auction-catalog essays might be generated in a fraction of the time, and at a fraction of the cost, that human writers require. A fair number of art-media news stories could be ChatGPT’d with no more loss in quality than news stories about other trades or general-interest subjects.

But as close readers might have suspected based on the hedging I dropped into that last paragraph, our niche industry also shows where A.I.-powered chatbots’ value peters out. I would even go so far as to say that artwork is the ultimate stress test for this technology.

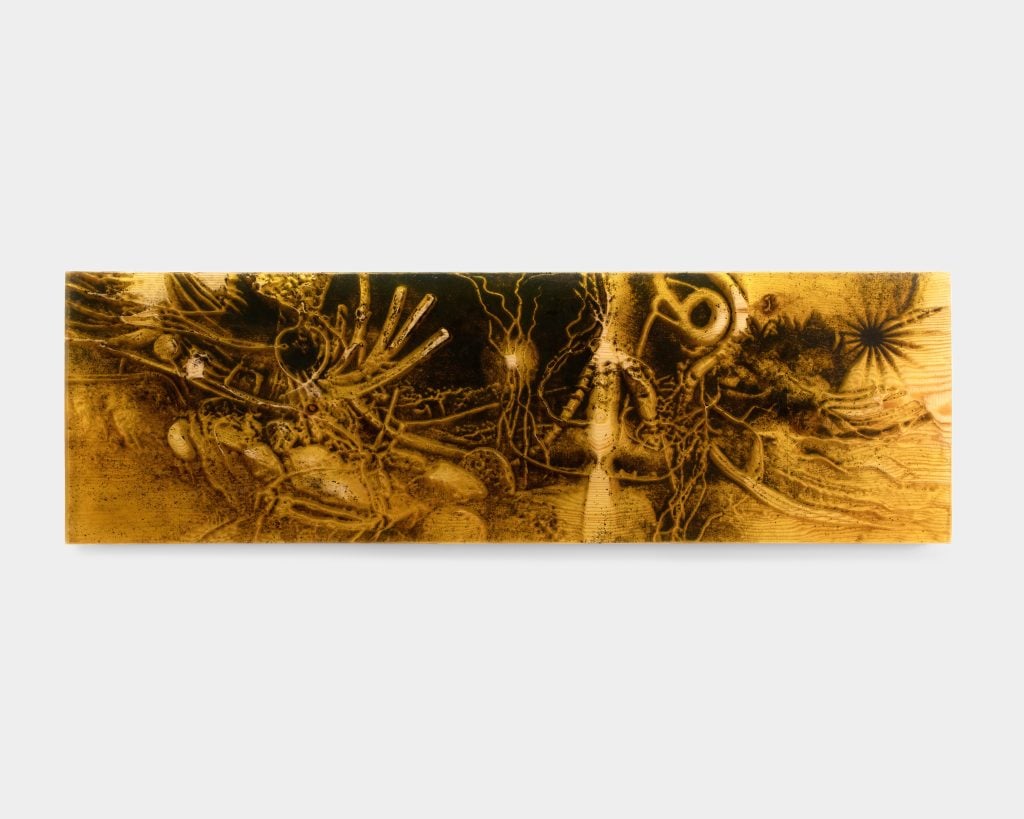

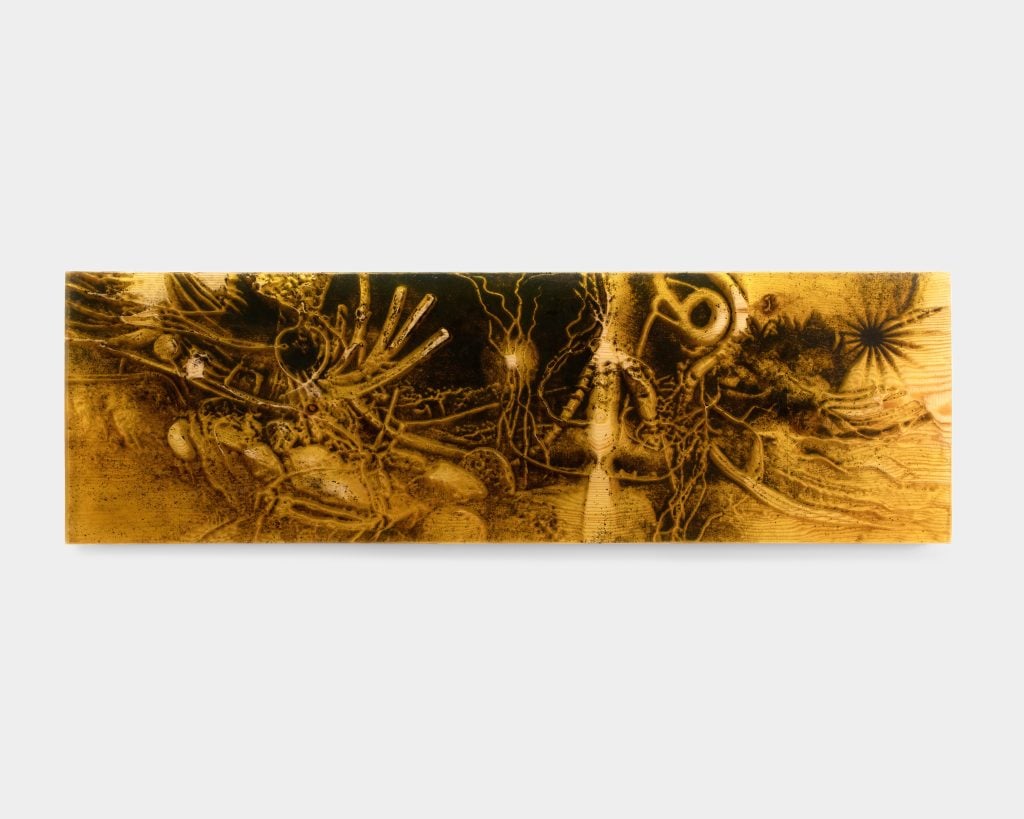

Jura Shust, Walking, knocking on the roots, and shaking the spruce paws #7 (2023). Courtesy of Management.

LIMITS OF LANGUAGE

If you’re an elder millennial like myself, let alone someone even closer to decrepitude, you know that chatbots have been around for decades. (By most accounts, the first one, called ELIZA, was developed in 1966 at MIT.) Artists have been engaging with them for almost as long. That goes for both producing actual works (see: early adopters like Lynn Hershman Leeson and her 1999–2002 chatbot-based landmark Agent Ruby) and the type of ancillary text material necessary to play the contemporary art game.

To the latter point, it was around 2010 that software-fluent artists started launching algorithms capable of auto-generating artist statements from a handful of user-supplied keywords. Examples include the Artist Statement Generator 2000, ArtyBollocks, and 500 Letters, all of which are still around today.

ChatGPT is orders of magnitude more advanced than these earlier examples. The more interesting point, however, is that ChatGPT may not be all that much better at this specific task. In fact, even that description may overestimate its value when it comes to many artists and studio practices.

Remember, ChatGPT and its ilk depend on large amounts of data. The more information there is about a given subject online, the more capably chatbots can generate sentences about it. This means chatbots could be powerful tools for automating texts about individual artists and movements that have been written about a lot, as well as emerging or largely unknown artists whose practices fit relatively neatly into styles or themes that have been written about a lot.

But the better an artist is at blazing an unconventional path, the less even the current generation of chatbots will understand what to do with them until, or unless, other humans produce a rich body of text around their practice.

“Whenever you’re working with something that’s more than the sum of its references, ChatGPT becomes completely useless,” said Anton Svyatsky, owner of Management gallery in New York.

Svyatsky is the rare dealer who has spent years navigating the labyrinths of advanced technology, first as a freelance tech professional, then as a curator and gallerist. Several of the artists in Management’s program today wrestle with issues stemming from the ways that tech has warped our lives, our self-concepts, and our understanding of the world, past and present.

No surprise, artificial intelligence has leapt to the forefront lately. As of my writing, Management’s current show is “Coniferous Succession,” a solo exhibition by Belarusian-born, Berlin-based Jura Shust, who collides mythology and technology to illuminate both subjects.

At the core of “Coniferous Succession” are the forest myths and rituals developed by early inhabitants of what is now Eastern Europe. The show includes a sound installation describing these long-ago cultures’ beliefs, passed on through oral tradition, about the symbiotic relationship between humans and spruce trees. Shust then used these vocals as prompts for a text-to-image generator, which produced fantastical renderings of foliage that were then machined into pine panels whose depressions he filled with soil and “fossilized” with resin.

“Jura is referencing a mythology that doesn’t have a written record, so it’s a highly specialized field of knowledge, and he’s using that to generate highly original images,” Svyatsky said. The resulting works find new meaning by exploiting the gaps in the algorithm’s comprehension; it creates alien visions of something like foliage precisely because its source material is so niche.

This tension clarifies the ceiling on the value of A.I.-powered chatbots to the art world. In theory, they should be well equipped to spin out a competent-enough wall placard about the life of Picasso, an entry-level essay on Impressionism, or an auction house’s catalogue text lauding the allegedly unparalleled vision of a major consignor. There is more than enough fodder of those kinds available online for the machine to eat, digest, and extrude something similar but new with reasonable success.

Installation view of Jura Shust, “Coniferous Succession.” Photo by installshots.art. Courtesy of Management.

The same is true for artist statements or press releases for gallery shows by emerging artists creating middle-of-the-road gestural abstractions, identity-based figurative paintings, neon text art with minimum viable cleverness, or a number of other familiar types of works discussed in equally familiar terms. The hard truth is that the majority of art made in the world, and the majority of text churned out about it, is relatively generic. To believe otherwise is to fall victim to what theorist Margaret Boden called the “superhuman-human fallacy”: dismissing A.I. because algorithms engineered to write text or produce images can’t yet reach the apex of human performance ignores the fact that most humans who write text or produce images can’t reach the apex of human performance, either.

The creative ceiling on chatbots may partly come from their developers’ back-end efforts to prevent these internet-chugging algorithms from “[going] off on bigoted rants” triggered by the vast online cesspools in their diets, as writer Jay Caspian Kang pointed out. Kang notes that one Twitter user “ran the ChatGPT through the Pew Research Center’s political-typology quiz and found that it, somewhat unsurprisingly, rated as an ‘establishment liberal,’” probably the demographic most likely to use it, as well as the demographic most desirable for OpenAI to sell products, services, and (maybe, someday) ads to. That positioning also gears the chatbot to manufacture mid content in a mid voice for a mostly mid consumer base.

That might be a good business strategy for a Silicon Valley startup with grand ambitions. But it’s not such a great move for anyone hoping to make an impact in the art world.

“A lot of time galleries like mine would be asking writers to write press releases or essays for one of two reasons: one, because the practice of the artist resonates with the practice of the writer, and you get a synergistic result; two, to attract the audience of a particular writer,” Svyatsky said. “You’re stacking the deck, so to speak.” In high-end cases, an art business can also commission a particular writer as a flex, similar to the motivation for commissioning a star curator to organize an actual show.

Put it all together, and the likeliest outcome is that most uses of ChatGPT will be driven by cost-cutting and efficiency-seeking, both inside and outside the arts. Ironically, it could change the business on the macro level of hiring and time-management precisely because, so far, it can’t do much to change the business on the micro level of writing genuinely revelatory texts on its own. The upper limit could elevate in the future, as developers chase the grand goal of creating artificial general intelligence, the type of thinking, feeling machine theoretically capable of insights beyond human comprehension. But if or when that gateway opens, its impact on the art world might be the least of our concerns.

That’s all for this edition. ‘Til next time, remember: there’s always another level to strive for.
